Are we going in the right direction?

Using the Application Usability Levels to track our field’s progress

Metrics seem to rule our lives. Whether through our daily step counts or metrics used in our performance evaluations, metrics have been injected into everything we do. And in some respects, that is good. Metrics can help us track our progress to a goal - similar to keeping track of milestones. They are a great way to ensure we stay focused and on the right course. And this is where our story begins.

Back in 2016, I served on the Living With a Star TR&T committee. At the end of our term, we were asked to tackle a complex problem. The president's office had released the Space Weather Operations, Research, and Mitigation plan, or SWORM. Part of this plan was to better track and trace how research transitions from the fundamental, pure research side to the applied and operational side of space weather.

If you know anything about scientists, we tend to be magpies. See a shiny new event? Let's go look at that. Found a new analysis method? Let's try to use it for all the things. We make progress, and because of that curiosity and the fluid path toward discovery, we get new innovations. When reading about a new scientific discovery, it sounds like we take a path as straight and clear as the streets of a nicely laid out gridded city like Washington, D.C. In reality, the way toward discovery is like wandering around the old cow paths in Boston, which have defined the street layouts. You might eventually get to where you thought you were going - but you are just as likely to have found a new end spot that happens to be a lovely square you just discovered. Fun, valuable, but not often efficient when developing a tool or product that others count on.

So a few of us from that group took it upon ourselves to find another way forward. Not something that would replace the creative random walk of much of science, but a framework that would help keep us heading in a predefined direction.

As researchers, we first looked to see if other fields and disciplines had already solved this problem. And to some extent, they had. The first and most famous tracking framework in space is the Technology Readiness Levels (TRLs). They were developed to track the progress of hardware toward being used successfully in space. The framework has been in successful use since the mid-nineties and is used worldwide by governments, academia, and industry. It has helped produce a common language that crosses these institution types and fields. When someone says their product is at a TRL of 6, you know it's highly developed but probably has yet to fly in space.

You might then ask, as we did, would the TRLs work for us? Can they be used to track the progress of science? People have tried. It works sometimes, but so much has to be changed to shoehorn science into the TRLs that it is often unclear what someone means by a TRL 6 science product. For instance, most science products won't fly in space. So if you use the original TRL definitions to track both hardware and software, ground software will never, by definition, reach TRL 9. This is fine - but it brings in an implicit bias that ground-only software at TRL 6 (one of the last TRLs, if not the last, that such software would reach) is less advanced than hardware at TRL 9.

Okay, so the TRLs are not quite what we were looking for. We then asked whether other groups were using different tracking frameworks. That is when we happened upon the Application Readiness Levels, or ARLs. It turns out one of the primary developers of the ARL framework was Lawrence Friedl, who was at NASA, not in the Heliophysics Division, but in the Applied Sciences Program under the Earth Science Division.

We invited Lawrence to join us at a meeting to discuss how the ARLs work and how we might apply them, and thankfully he joined us! The first thing he said was that we needed a name change: it's great to have something ready for use, but it's more important that it is used and usable. Thus, the first order of business was to start developing the new Application Usability Levels (AULs).

What was really amazing about working with Lawrence was seeing how both individuals and organizations could use this framework to track progress. He encouraged us to build more flexibility into the system so that a wide variety of projects could use it. As a science community, we have a wide range of projects and product types. We have instrumentation and hardware; we have many software tools for collecting and processing data from hardware and models, along with data analysis tools; we have pure research that results in new understanding, which feeds new research products and results in papers; and we have products that will be used by industry or other forecasters.

Our goal became ambitious: we wanted to develop a tracking framework that could help individual projects and larger organizations and be used by various project types. The need for such a framework to be so diverse meant that we needed a diverse group of individuals to be a part of the development... and that is what we got.

Through the magic of telecons (pre-video teleconferencing), the dedication of people working at odd hours to support meeting times literally half a world away, and the remarkable collaborative tool called Overleaf, we brought together 24 individuals from 20 institutions and 6 different countries. Some were experimentalists and space weather forecasters who worked at operational institutions. Others were pure fundamental scientists, data providers, and tool developers.

We argued a lot over definitions. Who would have thought we couldn't even agree on what a single word meant? This led to the development of our vocabulary - with an admission that some of the terms would mean different things to others. For the purposes of our paper and this framework, each term carries the definition we specified. In the article, we encouraged everyone to develop their own list of terms and definitions needed for their project.

Once we agreed on words and language, we looked at the stages and milestones in the ARLs and TRLs. Finally, we discussed lessons learned from the previous frameworks and how we could build enough flexibility to ensure all the AUL stages were usable by all.

It became evident early on that communication is critical - communication across the team and between those developing the product and those who will use it. However, the emphasis on communication pathways was limited in the ARLs and completely missing in the TRLs, so we made it explicit and continuous across all phases.

Communication can and should take many forms. Sometimes it may be scientific papers or tech reports; other times, it might be emails and phone calls, or just clear documentation of the product, the testing, and a user guide.

We also found that ensuring we had outs along the way was crucial. Thus, off-ramps and guard rails were put in to ensure we weren't going down a path that would not get us to our final position. These aren't really off-ramps but detours back through the initial phases where more work could be done at the fundamental research level, validation level, or wherever work is needed to start back down that straight path toward the intended use.

This meant we needed to include tests of feasibility, validation, and verification at multiple points throughout the process. This might seem tedious, but it is essential, as the product should be validated as it moves and develops on the different systems where it may be used (e.g., on your own personal computer vs. on a forecasting computer at places like NOAA).

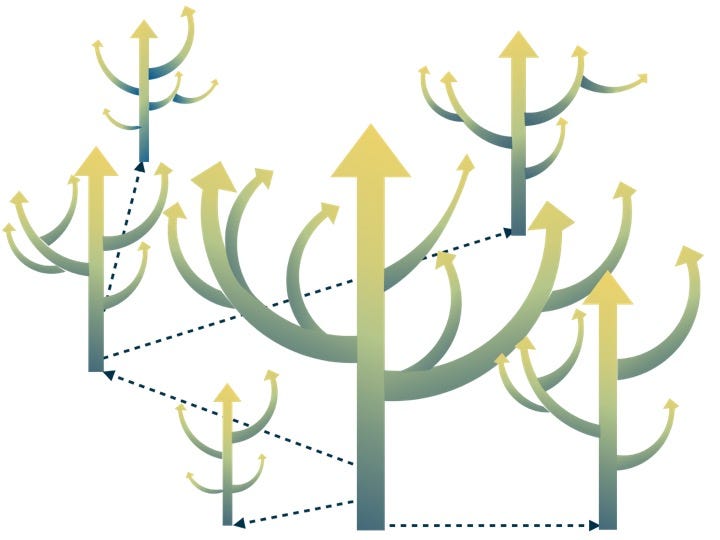

Once we gathered the identified needs and lessons learned, we followed a similar layout to the ARLs and TRLs. We have nine levels and three phases. The first phase (levels 1-3) focuses on developing the idea, finding and communicating with the person or group who will use the end product, and determining if the project is feasible. The second phase (levels 4-6) focuses on the product's complete development and testing. The third phase (levels 7-9) takes the product from the developer's or researcher's side and transitions it to its final location, delivers all needed documentation, and continues ongoing validation in this end state.
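If you like to see structure as code, the level-to-phase layout can be sketched in a few lines of Python. This is only an illustration: the function name `phase_for_level` and the short phase summaries are our own shorthand paraphrasing the paragraph above, not official AUL wording.

```python
# Hypothetical sketch: nine levels grouped into three phases.
# The summaries paraphrase the article; they are not official AUL text.
PHASE_SUMMARY = {
    1: "develop the idea, engage the end user, check feasibility",
    2: "complete development and testing of the product",
    3: "transition to the final location, deliver documentation, keep validating",
}


def phase_for_level(level: int) -> int:
    """Return the phase (1-3) that contains a given level (1-9)."""
    if not 1 <= level <= 9:
        raise ValueError(f"level must be between 1 and 9, got {level}")
    # Levels 1-3 -> phase 1, levels 4-6 -> phase 2, levels 7-9 -> phase 3.
    return (level - 1) // 3 + 1


if __name__ == "__main__":
    for level in range(1, 10):
        phase = phase_for_level(level)
        print(f"Level {level}: phase {phase} ({PHASE_SUMMARY[phase]})")
```

Rejecting out-of-range levels up front is a deliberate choice here: a typo in a status report should fail loudly rather than quietly land in the wrong phase.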

Okay, great; we have a framework with many milestones and metrics that can be gathered. How does this help an individual and an organization with tracking progress? Well, that's where our most recent paper comes in.

How this might help an individual is pretty straightforward. Using the AULs for your product development helps keep you and your team on track, encourages clear communication about what the project can and can't do, and helps tell the broader community what your project does, how it performs, and what stage of development it has reached. Awesome! Please use it and let us know how it goes! We expect that changes will be needed, with tweaks here and there, and that lessons will be learned and should be applied. So we invite you to work with us as you find improvements we should make!

But how would an organization use this? Good question! It depends on the organization, but most will likely use it in similar ways. The first and easiest way is to keep track of each project. Are they meeting goals? Are they all stuck in the same place and in need of resources to overcome or remove the roadblock? Are some sprinting ahead but still need to complete specific steps, such as the communication or validation milestones (often difficult ones that only some feel are necessary, but that are crucial for reproducibility and for gaining trust in any product)? From a managing or supervising standpoint, those are all important reasons for using a tracking framework.

Another reason to use a framework such as the AULs, depending on your institution, is to keep an eye on the health of your program. A healthy program must have a good balance of new projects, those making steady progress, and those being completed. By using the same framework, and thus the same language, to discuss a wide range of projects, you can get a quick high-level overview of your program's health and continued progress. The Applied Sciences Program at NASA does this well and provides a real-world example of how tracking frameworks can be used this way. By tracking multiple projects, you can identify potential roadblocks and address them. This might be through the infusion of more time and effort, the procurement of new systems or data, or the recognition that removing the roadblocks is not currently feasible, in which case you can help people transition to other activities.
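To make the program-level view a bit more concrete, here is a minimal Python sketch of the kind of roll-up an organization might do. Everything in it is an assumption for illustration: the `Project` record, the `stalled` flag (set by whoever reviews the project), and the rule that a level counts as a shared roadblock when more than one project is stalled there. It is not how any particular program actually tracks its portfolio.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Project:
    """Hypothetical project record; the fields are illustrative only."""
    name: str
    level: int             # current AUL level, 1-9
    stalled: bool = False  # assumed flag set during a project review


def phase_of(level: int) -> int:
    """Map a level (1-9) to its phase (1-3)."""
    if not 1 <= level <= 9:
        raise ValueError(f"level must be between 1 and 9, got {level}")
    return (level - 1) // 3 + 1


def portfolio_summary(projects: list[Project]) -> dict:
    """Count projects per phase and list levels where several are stalled."""
    per_phase = Counter(phase_of(p.level) for p in projects)
    stalled_by_level = Counter(p.level for p in projects if p.stalled)
    # Assumption: two or more stalled projects at one level suggest a shared roadblock.
    shared = sorted(lvl for lvl, n in stalled_by_level.items() if n > 1)
    return {
        "per_phase": {phase: per_phase.get(phase, 0) for phase in (1, 2, 3)},
        "shared_roadblocks": shared,
    }


if __name__ == "__main__":
    # Invented example projects, not real ones.
    demo = [
        Project("idea-stage study", 2),
        Project("model A", 5, stalled=True),
        Project("model B", 5, stalled=True),
        Project("operational tool", 8),
    ]
    print(portfolio_summary(demo))
```

The per-phase counts give the quick balance check (new, in progress, nearing completion), while the shared-roadblock list points at the places where extra resources might unblock several projects at once.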

Suppose you are looking at multiple projects all working towards the same goal. In that case, the AULs provide a straightforward way to communicate how far along each project is and how well the different projects meet the requirements.

Tracking frameworks like the Application Usability Levels benefit individuals, projects, and organizations. We hope you find the Application Usability Levels beneficial for you and your work. Please let us know what works and what doesn't.